The ultimate goal of SEO is to get sites ranked at the top of search results pages. Standing in the way of this prized position on the SERPs are hundreds to thousands of other websites, all looking to claim that same spot at the top of the podium.

But, that isn’t the only obstacle.

There are also any number of on-site SEO issues that can slow your site’s progress during its climb to the top. Being able to quickly identify and correct these problems, before they begin to really damage your site’s ranking, user experience and performance, is a key component of being a successful SEO professional.

And I really do mean any number of issues. I still occasionally run into strange, technical SEO problems that I’ve never seen before. To me, tinkering with a site to try and debug one of these foreign issues is a hidden pleasure; it’s like solving a puzzle.

However, I understand that not every site owner shares this sentiment. For most, these technical SEO issues cause real anguish and frustration, especially when they go on unchecked and cause real harm to a site’s rankings in the SERPs.

In this discussion, I’d like to break down some of the more common issues that can affect SEO and cause negative impacts on your site’s rankings.

Due to the sheer number of potential SEO issues, I am going to separate this discussion into two parts.

In this first section, we’ll focus on technical SEO issues, while part two will deal with content-related optimization obstacles.

So, let’s get started with Part One.

A Quick Word About Prioritizing SEO Issues

Before we get into our list of common SEO issues, I first want to raise an important point about prioritizing your SEO issues. These problems come in a lot of different shapes, sizes and degrees of severity.

For instance, your site could be afflicted by a severe, technical SEO issue that needs to be addressed as soon as possible, to limit how much damage it causes to the site’s rankings and overall standing with Google and other search engines.

On the other hand, your site may have minor issues that, while important to remedy eventually, don’t need to be addressed immediately.

Whenever I start investigating an SEO problem on one of my sites, the first thing I ask is: “Does this actually matter? Is solving this issue going to positively impact my site’s performance enough to justify the time spent fixing it?”

Otherwise, you could find yourself spending entirely too much time trying to solve a single issue that only affects a handful of pages. Trust me, I’ve been there far too many times. When this happens, it’s better to put that issue on the back burner and address the more pressing problems first.

I. Site Structure Issues

Every website has some sort of site structure. How your site is structured (and how you document that layout) can be an important and sometimes overlooked factor in SEO. With a great site structure, your visitors will be able to navigate seamlessly, your links will flow more logically and your site will be correctly and efficiently crawled and indexed by Google’s spiders.

Enhancing the User Experience

You can spend weeks creating the most beautiful website possible, but if the structure doesn’t flow and allow for easy visitor navigation, all those pretty fonts, graphics and colors aren’t going to matter.

When your site has bad structure, visitors won’t stay long, which will negatively impact your site’s rank because Google’s algorithm takes into account things like dwell time, CTR and other user activity metrics.

And, as I’ve said in the past, user experience trumps SEO. So, your sites should never have bad structure, plain and simple.

Crawlability via Sitemaps

Optimizing your site structure isn’t solely about making the experience better for users. It also makes your site more accessible to the crawl bots looking to index it. A clean, logical site structure makes it easy for bots to access your site and index its pages.

Creating a sitemap is an important SEO strategy for boosting crawlability. If you’re unfamiliar, this is essentially the instruction booklet that these bots read to understand how to crawl your site. It points them to your most important pages so that those get indexed. (Keep in mind that leaving a page out of the sitemap doesn’t hide it on its own; pages you want kept out of the index need a noindex directive.)

If you haven’t taken a look at your sitemap in a while, it could be the source of some of your SEO woes. It’s easy to fall behind in maintaining an accurate sitemap. However, when you combine a seamless structure with an accurate sitemap, you achieve SEO glory for this category.

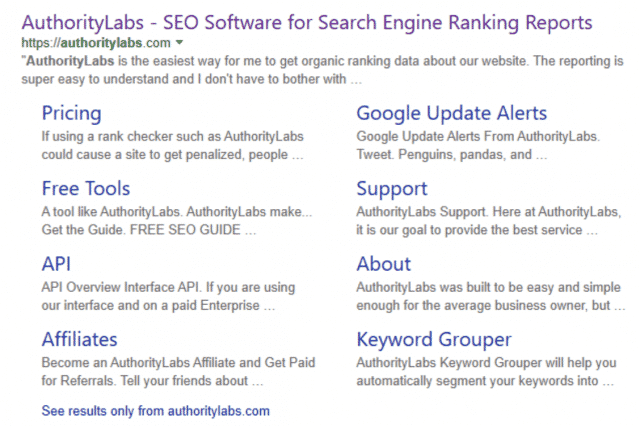

Google’s algorithm may even decide your site is worthy of additional sitelinks. This gives visitors the chance to jump right to the page they want from the SERP.

SOLUTIONS:

- Hierarchical site structure: You want to review your site structure and make sure it is organized and logical. It should have a hierarchical structure, where your homepage is at the top, followed by a selection of your primary category pages. Under these main pages are subcategories that relate back to their parent category. Everything should be linked together for easy, logical navigation. You also want your site to feel balanced. One main category shouldn’t be overloaded with subpages, while the next main category only has one or two.

- XML format sitemap: Reinforce the vision you have of your site’s structure with a properly drafted sitemap. There are different formats to choose from for your sitemap; most websites will benefit most from XML. You can create your sitemap manually, but if you are short on time or know-how, there are a number of tools and extensions that you can leverage to automate the process. Take some time to think critically about which pages and URLs you want to include in your sitemap. Remember, you have a limited crawl budget that you need to spend wisely by pointing crawl bots to your most valuable pages.

- Test and report: After you’ve finalized your sitemap, you can test it with Google Search Console. Then, submit it to Google through the Sitemaps report in Search Console. (Google has since retired its sitemap ping endpoint, so a ping request is no longer an option.)
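If you would rather draft the XML sitemap yourself than rely on a plugin, the format is simple enough to generate with a few lines of Python. The following is a minimal sketch, not a full-featured generator: the `build_sitemap` helper and the example.com URLs and dates are made up for illustration, and only the `loc` and `lastmod` elements from the sitemap protocol are used.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemap protocol (sitemaps.org).
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML as a string for a list of (url, lastmod) pairs.

    lastmod is an ISO date string such as "2024-01-15", or None to omit it.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages: homepage first, then a category and one subcategory.
PAGES = [
    ("https://www.example.com/", "2024-01-15"),
    ("https://www.example.com/services/", "2024-01-10"),
    ("https://www.example.com/services/seo-audits/", None),
]

if __name__ == "__main__":
    print(build_sitemap(PAGES))
```

Because the list of pages is just data, this is also the place where you decide which URLs deserve a share of your crawl budget: anything you leave out of `PAGES` simply never makes it into the file.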

II. Slow Page Speeds

After site structure, the speed of your pages is the next foundational source of potential SEO problems.

Similar to the site’s structure, page speeds affect both your SEO rankings and your user experience, which makes it crucial to correct issues in this category. Otherwise, they can hinder your SEO efforts before you’ve even created a single piece of content!

Direct Ranking Indicator

Google has said that it looks at page speed when determining site rankings. This makes a lot of sense; Google wants to ensure that search users have the best possible experience when they click through from the SERPs to a top result. That means landing on a site that loads quickly.

Simply put, fast websites that load pages quickly are looked on more favorably by Google.

High Bounce Rates

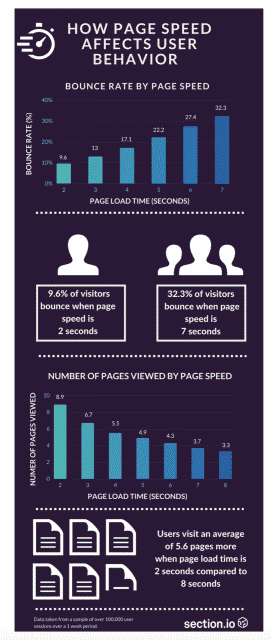

Visitors to your site won’t stick around for long if your pages take several seconds to load. Users expect your website to load almost instantaneously. With every additional second that your page takes to load, the chance rises that the visitor hits the back button and returns to the SERP.

This is a great infographic from Section.io that demonstrates the relationship between page speeds and bounce rates:

These figures have been significantly impacted by mobile users, who need information on the go and are therefore even more concerned with page load times.

Harmful to Indexing

Site speed can also impact your site’s indexing. Crawl bots have a limited “budget” that they allocate to each site. This determines how much time and how many resources can be spent crawling a site.

If your pages are exhausting additional resources because they are slow to load, then the bots won’t get to as many within their crawl budget.

On the other hand, if pages load quickly, crawl bots can move smoothly and reach all of the pages that you want included in your indexing.
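To put rough numbers on that, here is a back-of-the-envelope sketch. It assumes a deliberately simplified model in which the crawl budget is a fixed amount of time and every page takes the same time to fetch; the 600-second budget and the per-page timings are invented for illustration, since search engines don’t publish figures like these.

```python
def pages_crawled(budget_seconds, seconds_per_page):
    """Pages a bot gets through if every fetch takes seconds_per_page."""
    return int(budget_seconds // seconds_per_page)


# Hypothetical budget: ten minutes of crawl time per visit.
BUDGET_SECONDS = 600

slow_site = pages_crawled(BUDGET_SECONDS, 4.0)  # 150 pages at 4 s per page
fast_site = pages_crawled(BUDGET_SECONDS, 0.5)  # 1200 pages at 0.5 s per page

if __name__ == "__main__":
    print(f"Slow site: {slow_site} pages, fast site: {fast_site} pages")
```

Under those assumptions, the same budget covers eight times as many pages on the faster site, which is the difference between a bot reaching your deeper pages and running out of budget before it gets there.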

SOLUTIONS:

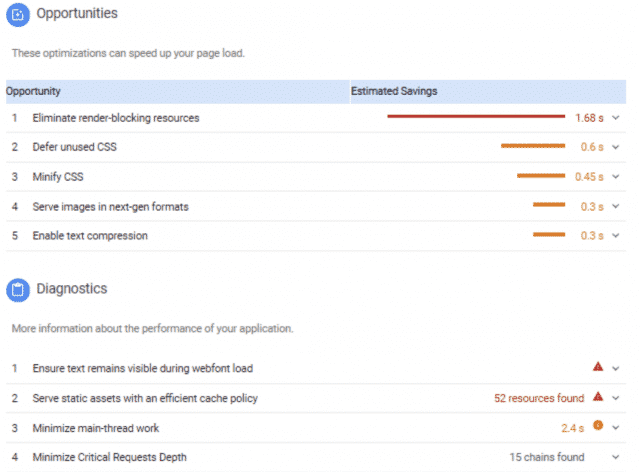

- Clock your speed: Before doing anything, you need to check whether site speed is an actual problem. Google PageSpeed Insights is your answer. Not only will it grade the speed of your site, but it will also offer some suggestions to get your speed rating into the green. For example:

- Optimize cumbersome content: Easily the biggest detriment to fast page speeds is cumbersome content, whether it is poorly optimized images, too much Flash content or otherwise. The more stuff you have on the page, the longer it takes to load. Remedying poorly optimized images is often just a matter of using smaller and fewer images (but don’t sacrifice their quality!). When it comes to Flash content, however, you have to get a little more intimate with your website, as these issues usually lie in the coding. You’ll want to remove code and content that isn’t necessary, while also separating inline scripts and styles. We’ll touch more on both of these in Part 2 of debugging common SEO issues, when we look at content-specific obstacles.

- Re-clock your speed: Once you’ve made changes, you want to be certain that they’ve had the positive impact on your site’s speed that you hoped for. So, take another measurement and see where your pages stand!
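If you want to clock and re-clock your pages without pasting URLs into the browser each time, PageSpeed Insights also has an HTTP API. The sketch below is one way to use it, with some assumptions to verify against Google’s documentation: it targets the v5 `runPagespeed` endpoint, reads the score from `lighthouseResult.categories.performance.score` as I understand the response layout, and skips the API key that heavier usage may require. The function names are my own.

```python
import json
import urllib.parse
import urllib.request

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def build_psi_url(page_url, strategy="mobile"):
    """Build the request URL for one page; strategy is "mobile" or "desktop"."""
    query = urllib.parse.urlencode({"url": page_url, "strategy": strategy})
    return f"{PSI_ENDPOINT}?{query}"


def performance_score(report):
    """Turn the 0-1 performance score in a parsed response into 0-100."""
    score = report["lighthouseResult"]["categories"]["performance"]["score"]
    return round(score * 100)


def fetch_score(page_url, strategy="mobile"):
    """Call the API over the network and return the 0-100 score."""
    request_url = build_psi_url(page_url, strategy)
    with urllib.request.urlopen(request_url, timeout=60) as resp:
        return performance_score(json.load(resp))


def score_change(before, after):
    """Positive means the page got faster between two measurements."""
    return after - before
```

A typical workflow would be to call `fetch_score` on a handful of key pages before you start optimizing, store the results, and then run it again afterwards and compare the two with `score_change`. Scores fluctuate a little from run to run, so a small difference in either direction is not worth reading much into.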

III. Link Issues

As we delve deeper into the layers of our website and start looking at the finer details, we come to issues involving links. Bad and broken links, when left unchecked, can quickly escalate to a real SEO problem.

Again, we’re looking at issues with crawling, user experience and overall page performance. A 2016 study of websites and SEO obstacles by SEMRush revealed that 35% of websites had broken internal links and 25% had shoddy external links. This means that link issues are a fairly common problem plaguing websites.

Crawl Bots Stop at Broken Links

When crawl bots index your site, they use your internal linking structures to move through your site. When one of these links is broken and they can’t go down that path, it causes an interruption to your site’s indexing.

Not only does this lead to poor allocation of your crawl budget, but it also could mean that your important pages are not indexed because the links leading to those parts of your site are not operational.

This is where broken internal links really become a problem. If your most valuable pages aren’t being included in your index due to bad links, then it could really impact your rankings and visibility in the SERPs.

Potential visitors could be finding it hard to find the pages and content you’ve worked hardest to put together.

Users are Not Fond of 404 Pages Either

Like a page that takes too long to load or a piece of content that over-promises and underdelivers, 404 pages create a disruption in a visitor’s browsing. This can lead to higher bounce rates, which undermines your site’s authority.

These site visitors may even look at your site as low quality, even though a large percentage of sites have broken links.

Google Penalizes Shady Linking

External links that point to your site are a great way to build site authority. It signals to Google that other, reputable sites find your pages to be so helpful that they’ve linked to them. When SEOs first started realizing the power of external links, the art of shady link building began.

Websites were essentially buying outside links to help pad their authority. Google caught on and quickly put the kibosh on the whole thing.

Today, this means we need to exercise a bit more caution and scrutiny when receiving external links. If you have too many links coming in from odd sources, Google may get suspicious, even if you never paid or asked for these links.

SOLUTIONS:

- Detecting broken links isn’t as tedious as it may sound. With the help of an SEO auditing tool, you can scan your pages for broken links and receive a report on each offender. After your audit, focus on fixing the links with the biggest impact. A broken link on your homepage or a main category page is going to cause a lot more issues than a broken link on a single blog post that receives very little traffic. You can eliminate these bad links altogether, or edit the link to send traffic to a new destination.

- Running an SEO audit isn’t like changing the batteries in your smoke detector. You don’t want to wait for your site ranking to dip and the warning lights to start flashing before you begin looking for the problem. You should schedule SEO audits on a fairly regular basis. This will help you stay on top of things like broken or shady links.

- Despite your best efforts, broken links will still occur and visitors will sometimes find themselves facing a 404 Page Not Found error. You can limit how disruptive this dead end is by creating your own 404 page that provides alternate links for the user to get to where they want to go on your site, instead of clicking back to the SERPs. This is a great example by Airbnb:
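To give a feel for what an auditing tool does under the hood when it scans a page for broken links, here is a small sketch using only Python’s standard library. It is an illustration rather than a replacement for a real audit tool: the names `LinkCollector`, `http_status` and `find_broken_links` are my own, it checks a single page’s HTML rather than crawling a whole site, and it treats any status of 400 or above (or no response at all) as broken.

```python
from html.parser import HTMLParser
from urllib.error import HTTPError
from urllib.parse import urljoin
from urllib.request import Request, urlopen


class LinkCollector(HTMLParser):
    """Collect absolute URLs from the <a href="..."> tags in a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        # Skip in-page anchors and non-HTTP schemes.
        if href and not href.startswith(("#", "mailto:", "tel:", "javascript:")):
            self.links.append(urljoin(self.base_url, href))


def http_status(url):
    """Return the HTTP status for url, or None if it can't be reached.

    Uses a HEAD request to avoid downloading the body; some servers
    reject HEAD, so a real tool would fall back to GET.
    """
    request = Request(url, method="HEAD", headers={"User-Agent": "link-audit-sketch"})
    try:
        with urlopen(request, timeout=10) as resp:
            return resp.status
    except HTTPError as err:
        return err.code  # e.g. 404 or 500
    except OSError:
        return None  # DNS failure, refused connection, timeout


def find_broken_links(html, base_url, get_status=http_status):
    """Return (url, status) pairs for each distinct broken link in html."""
    parser = LinkCollector(base_url)
    parser.feed(html)
    broken = []
    for link in dict.fromkeys(parser.links):  # de-duplicate, keep order
        status = get_status(link)
        if status is None or status >= 400:
            broken.append((link, status))
    return broken
```

The `get_status` parameter is there so the status lookup can be swapped out, for instance with a cached or rate-limited version when you run this across many pages. Once you have the list of offenders, you would still sort it by how much traffic each linking page gets before deciding what to fix first.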

Conclusions

These technical SEO issues are your first priority when debugging your site to raise its rankings. These issues can create user experience obstacles that may cause a lot of your traffic to simply click away from your site. They also make it harder for Google to properly understand and index your site.

By remedying these problems, you ensure that your site as a whole functions much better and appears on the SERPs that you want it to. You’ll be on the right track towards reaching that top position on Google that you’ve always dreamed of having.

But, the job isn’t over yet!

We still have plenty of content optimization problems to cover in part two of this discussion on debugging common SEO issues, like dealing with duplicate content, avoiding keyword issues, optimizing images, fixing meta descriptions and more.

Stay tuned for Part 2!